In the book I am writing on the history of culture wars, I place the late-twentieth-century controversies about race in the context of the larger war for the soul of America. This includes the ongoing debate about affirmative action, which came to the surface in 1978 with Regents of the University of California v. Bakke, the Supreme Court decision that left affirmative action weakened but intact. It also includes the shouting matches over race, poverty, and public policy, intellectual skirmishes carried over from the Moynihan Report conflagration of the 1960s.

That race helped shape the culture wars is hardly surprising, given the degree to which the nation’s racial landscape had been transformed. Racial politics were persistently perplexing, despite the successes of the civil rights movement, largely because, as President Lyndon Johnson proclaimed in a Howard University speech on June 4, 1965, “equality as a right and a theory” was not the same thing as “equality as a fact and as a result.” In other words, the equal rights codified by the Civil Rights Act of 1964 did not entail actual equality between the races. This fact was made horrifyingly apparent by the numerous riots that plagued American cities in the 1960s, beginning with the riot that exploded in the predominantly black Watts neighborhood of Los Angeles in August of 1965, resulting in 34 deaths, thousands of injuries, and untold millions in property damage. That this riot occurred only a few days after the Voting Rights Act outlawed discriminatory voting practices highlighted the vast discrepancy between equality as a right and equality as a fact.

American intellectuals mostly agreed that post-civil rights America was not post-racial; that racial disparities persisted. But they often vehemently disagreed about how to diagnose and solve the array of problems stemming from this fact. By the 1980s, such disagreements became more pointed. Liberal stalwarts such as sociologist Frances Fox Piven continued to argue for a more robust welfare state, but could no longer expect to dominate the national conversation, due to newly created space for conservative policy intellectuals such as American Enterprise Institute Fellow Charles Murray. Author of the 1984 bestseller Losing Ground, which became something of a policy manual for the Reagan administration, and which Daniel Rodgers positions as one of the more odious representations of the microeconomic contagion that defined the “Age of Fracture,” Murray argued that expensive Great Society programs designed to alleviate poverty actually resulted in increased poverty, the unintended consequence of the ironic incentives built into welfare policy. According to Murray, after calculating the costs and benefits of marrying and seeking employment, a poor couple, whom he imagined as rational economic actors “Harold” and “Phyllis,” would have concluded that it made more sense to remain unmarried and on welfare. Rodgers crisply summarizes the implications of Murray’s contention: “The cure constructed the disease and fed on its own perverse failures.” The seductiveness of Murray’s microeconomic solution was obvious, especially in an era defined by austerity: doing less was both inexpensive and achieved a better result. At a time when urban poverty seemed more and more intractable, and more and more linked to racial inequality—evident in the racialized discourse of the so-called “underclass”—Murray’s “benign neglect” approach proved salient, particularly since the electorate was impatient with political measures that appeared to benefit blacks. 
It is no surprise, then, that Losing Ground helped lay the foundation for Clinton’s “end welfare as we know it” legislation.

The intellectual history of the so-called underclass reveals the centrality of race to the highly contentious struggle to define a normative America. Racial liberals like Piven sought a more empathetic nation, one more willing to make sacrifices for the victims of persistent forms of institutional racism, in part by expanding the welfare state. But a growing number of Americans, Charles Murray’s audience, believed that welfare, or government handouts, was an affront to those traits that supposedly made America great, namely hard work and individual responsibility. This conservative view, always present in American social thought, became increasingly popular after federal law was redesigned to prevent discrimination. This highlights one of the unintended consequences of civil rights legislation: racial disparities could no longer easily be blamed on racism.

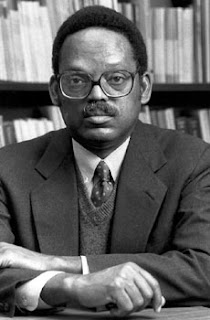

In this post-civil rights context, alternative explanations for the continued presence of an underclass proliferated. William Julius Wilson’s 1987 book The Truly Disadvantaged was one of the more discussed. Wilson, a liberal sociologist then at the University of Chicago, couched his book as an explicit effort to retake the debate from conservatives like Charles Murray.

As such, he partly attributed the existence of an underclass to economic factors such as deindustrialization, which resulted in joblessness for many city inhabitants. But Wilson also dedicated several chapters to a candid analysis of “the social pathologies of the inner city,” hardly a departure from Murray. Ghetto dwellers, for Wilson, were hemmed in by a vicious cycle that perpetuated the usual litany of pathologies, including illegitimacy and crime. Wilson’s book is better remembered for its gratuitous focus on the pathological behavior of the underclass than for its economic diagnosis of urban underemployment. As such, The Truly Disadvantaged failed to advance the national discussion of the underclass beyond Losing Ground.

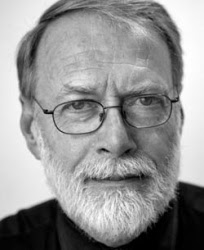

Murray, on the other hand, did advance the discussion, beyond even his own neoconservative framework. Joined by Harvard psychometrician Richard Herrnstein, he offered a new version of a much older social Darwinist framework in the 1994 bestseller The Bell Curve. Murray and Herrnstein contended that the underclass existed due to a gap in cognitive ability. Dull people, those with a low IQ (which they stridently defended as an unbiased measurement), were likelier to be poor and dysfunctional. Moreover, Murray and Herrnstein argued that IQ was mostly genetic and that blacks as an ethnic group were inherently duller than whites. Soon after publication, The Bell Curve became more than a mere book; it became a phenomenon. The book was dissected in most major national publications, and pundits of every ideological stripe weighed in on the national debate. Most liberals denounced the book. The New York Times columnist Bob Herbert labeled The Bell Curve “a scabrous piece of racial pornography masquerading as serious scholarship.” Most conservatives, even those who sought to distance themselves from The Bell Curve’s more odious conclusions, rushed to Murray and Herrnstein’s defense and attributed the controversy to the “political correctness” of their liberal critics.

Post-civil rights intellectual ferment also reshaped social thought on the left. A variety of left-leaning academics innovated theoretical approaches, new schools of thought, to explain the persistence of racial disparities in post-civil rights America. One prominent example is Critical Race Theory (CRT), which originated in the 1980s. (I’ve been enmeshed in CRT primary sources for weeks now, in preparation for an AHA paper on the topic; if you’re in Chicago next month, stop by our panel, titled “Black Ivy: African-American Intellectuals During the Twentieth Century.”) In terms of intellectual trajectories, CRT emerged as one of the stiffest challenges to conventional late-twentieth-century legal thought. Its theorists, chief among them its founding thinker, Derrick Bell (who died recently; see his obituary), drew upon older anthropological notions about the social construction of race to explain how the American legal system was complicit in the persistence (or, more ominously, the permanence) of American racism. In the paper I will be giving at the AHA, I argue that Bell’s experiences at Harvard University influenced his pessimistic rendering of American law and society.

Harvard Law School hired Bell in 1969 to placate black student protests that were part of a nationwide movement to make universities more amenable to minority students. In 1971, Bell became the first African American to gain tenure at Harvard Law. Bell’s courses on law and race were legendary among students, as was his famous casebook, Race, Racism, and American Law (1973). When he left Harvard in 1980 to become the dean of law at the University of Oregon, students organized protests to compel the administration to replace him with a minority professor. When Harvard administrators refused, students held their own course, where they continued to read Bell’s casebook. CRT emerged out of this extracurricular course, populated by such foundational CRT thinkers as Kimberlé Williams Crenshaw. Bell returned to Harvard in 1986 for another four stormy years. In 1990, when Bell threatened to remain on unpaid leave until the law school hired a woman of color, Harvard fired him. Out of these experiences at Harvard, Bell and others innovated the scholarship that formed CRT. They took stock of how racism manifested in a supposedly colorblind institutional setting that operated much like the legal system. Just as Bell critiqued the hidden biases of the so-called Harvard meritocracy, he also leveled a sustained scholarly analysis of, in Cornel West’s words, “the historical centrality and complicity of law in upholding white supremacy.”

CRT emerged in a moment of despair. The civil rights movement narrative, grounded in the belief that the United States was progressing beyond racial discrimination and in the ability of the legal system to redress discrimination, foundered on the rocky shoals of the Reagan era. Reagan codified the growing disenchantment with civil rights efforts by redirecting the Civil Rights Division of the Justice Department, under Assistant Attorney General William Bradford Reynolds, away from ameliorative efforts such as affirmative action. CRT sought to theorize how and why racial disparities persisted, and in some ways worsened, despite the many legal victories of the civil rights era. CRT openly questioned the traditional civil rights premise that racial progress was possible in the United States, a pessimistic outlook that informed much of Derrick Bell’s late work, such as his brilliantly provocative book, Faces at the Bottom of the Well, subtitled, pointedly, “The Permanence of Racism.” In that 1992 book, Bell eloquently gave life to the more academic body of CRT scholarship that had shaken up the prestigious law journals during the 1980s. An excerpt:

On the one hand, contemporary color barriers are certainly less visible as a result of our successful efforts to strip the law’s endorsement from the hated Jim Crow signs. Today one can travel for thousands of miles across the country and never see a public facility designated as ‘Colored’ or ‘White.’ Indeed, the very absence of visible signs of discrimination creates an atmosphere of racial neutrality and encourages whites to believe that racism is a thing of the past. On the other hand, the general use of so-called neutral standards to continue exclusionary practices reduces the effectiveness of traditional civil rights laws, while rendering discriminatory actions more oppressive than ever… Today, because bias is masked in unofficial practices and ‘neutral’ standards, we must wrestle with the question whether race or some individual failing has cost us the job, denied us the promotion, or promoted our being rejected as tenants for an apartment. Either conclusion breeds frustration and alienation—and a rage we dare not show to others or admit to ourselves.

I argue that CRT and its many offspring, such as whiteness studies, grew to be the dominant intellectual mode of thinking about race in post-civil rights America. In this way, it was the central oppositional formation to the neoconservative, post-welfare state, colorblind discourse, though the latter obviously had far more influence over policy. Agree or disagree, intellectuals who thought about race had to reckon with Critical Race Theory and with neoconservatism, one overly focused on race and racism to the exclusion of almost any other form of social analysis, the other so quiet on race and racism as to make one wonder if race wasn’t also the main point. But in making this argument about the bifurcated discourse of race in post-civil rights America, I do not wish to lose sight of alternative modes of analysis, roads not taken, so to speak.

One alternative mode of thinking through race that influenced intellectual history and some circles of literary study is cosmopolitanism. Cosmopolitan thinkers, especially Henry Louis Gates, Jr., sought to challenge the ontology of race more forcefully than did CRT thinkers. It’s not that CRT thinkers believed race ontologically “real”; rather, they fought hard to keep racial analysis, as a dichotomous black-white discourse, at the forefront, on the realist assumption that racism was the single most important foundation of American social stability and that resistance to it thus demanded a focus on it. Cosmopolitan thinkers de-emphasized dichotomies and accentuated the amorphousness of racial constructs. Race for them was performative, as was gender for Judith Butler.

I wrote a chapter for the forthcoming Routledge Handbook of Cosmopolitan Studies in which I implicitly argue that cosmopolitanism has not had much influence over racial thought in the United States. But it has influenced U.S. intellectual history, thanks in no small part to David Hollinger. His many interventions represent a cosmopolitan exploration of the ways in which our solidarities and identities (racial, religious, and national) govern our lives. In his now standard work, Postethnic America, Hollinger focuses on how solidarities and identities should ideally operate according to the principle of “affiliation by revocable consent.” In other words, he seeks to transcend the stultifying debates about multiculturalism, the players in which assume identities to be rooted in blood and immutable culture, to embrace more individualistic and voluntary conceptions of identity. However, in Hollinger’s follow-up to Postethnic, titled Cosmopolitanism and Solidarity, he emphasizes the less voluntary structures of solidarity, what he terms a “political economy of solidarity.” Solidarity, for him, “is a commodity distributed by authority,” especially when tied to the nation state. “Central to the history of nationalism, after all, has been the use of state power to establish certain ‘identities,’ understood as performative, and thus creating social cohesion on certain terms rather than others.” I would guess that the more tempered arguments made in Cosmopolitanism and Solidarity resulted from the criticism Hollinger might have received after Postethnic America from those influenced by the CRT mode of analyzing race, where black-white relations might not be immutable by blood, but certainly are not very mutable by American cultural standards.

What other modes of analysis grew out of post-civil rights intellectual ferment? I am genuinely interested in reader comments here. One mode that is perhaps my favorite, though it is less influential than all of the others I describe above, is the class-based analysis of race articulated by Adolph Reed, Jr. Reed’s analysis is, like CRT, pessimistic, but in much different fashion. Although Reed is hardly a post-racial thinker along the lines of the neoconservatives—he continues to analyze the ways in which racial inequality persists—he believes that class analysis is the best mode of thinking through such inequality in a post-civil rights context. In his fabulous 1999 book, Stirrings in the Jug: Black Politics in the Post-Segregation Era, Reed unsparingly critiques the ways in which civil rights professionals legitimized black political leaders, especially the new black mayors of cities like Atlanta, allowing these black politicians to further neoliberal policies that did great harm to the majority of their black constituents. Situated as such, Reed has been more critical of Obama than perhaps anybody else on the left, even before Obama won the presidency, calling him a “vacuous opportunist” in a May 2008 Progressive article. More recently, Reed talks about the “limits of anti-racism,” which seems to take his mode of thinking even further afield from CRT and mainstream liberal-left discourse on race.

Some snippets:

The contemporary discourse of “antiracism” is focused much more on taxonomy than politics. It emphasizes the name by which we should call some strains of inequality—whether they should be broadly recognized as evidence of “racism”— over specifying the mechanisms that produce them or even the steps that can be taken to combat them. And, no, neither “overcoming racism” nor “rejecting whiteness” qualifies as such a step any more than does waiting for the “revolution” or urging God’s heavenly intervention. If organizing a rally against racism seems at present to be a more substantive political act than attending a prayer vigil for world peace, that’s only because contemporary antiracist activists understand themselves to be employing the same tactics and pursuing the same ends as their predecessors in the period of high insurgency in the struggle against racial segregation.

…

Ironically, as the basis for a politics, antiracism seems to reflect, several generations downstream, the victory of the postwar psychologists in depoliticizing the critique of racial injustice by shifting its focus from the social structures that generate and reproduce racial inequality to an ultimately individual, and ahistorical, domain of “prejudice” or “intolerance.”

…

All too often, “racism” is the subject of sentences that imply intentional activity or is characterized as an autonomous “force.” In this kind of formulation, “racism,” a conceptual abstraction, is imagined as a material entity. Abstractions can be useful, but they shouldn’t be given independent life.

….

I can appreciate such formulations as transient political rhetoric; hyperbolic claims made in order to draw attention and galvanize opinion against some particular injustice. But as the basis for social interpretation, and particularly interpretation directed toward strategic political action, they are useless. Their principal function is to feel good and tastily righteous in the mouths of those who propound them. People do things that reproduce patterns of racialized inequality, sometimes with self-consciously bigoted motives, sometimes not. Properly speaking, however, “racism” itself doesn’t do anything more than the Easter Bunny does.

My position is—and I can’t count the number of times I’ve said this bluntly, yet to no avail, in response to those in blissful thrall of the comforting Manicheanism—that of course racism persists, in all the disparate, often unrelated kinds of social relations and “attitudes” that are characteristically lumped together under that rubric, but from the standpoint of trying to figure out how to combat even what most of us would agree is racial inequality and injustice, that acknowledgement and $2.25 will get me a ride on the subway. It doesn’t lend itself to any particular action except more taxonomic argument about what counts as racism.

6 Thoughts on this Post

Great phrase: “the war for the soul of America.”

Current battle being fought on Wall Street.

ShellY: Unfortunately, I can’t take credit for that phrase. It’s taken from Pat Buchanan’s infamous 1992 Republican Convention speech, where he pronounced the culture wars. But I like it so much it’s the title of my book-in-progress.

Thanks Andrew. You’ve given me much to think about.

I wonder what you think of Toure’s new book on “Post-Blackness”–does that represent cosmopolitanism in a new formulation or yet another discussion of race post-civil rights?

I also wonder about the after-effects of Cosby and Oprah on liberals. I don’t think they can be classified purely as producers of and/or influences on color-blind white folks, but they certainly had an influence. ooooh, there is always so much more I want to know about the world.

Also, have you seen the debate between Andrew Sullivan and Ta-Nehisi Coates on IQ? I only read Coates’ response, but it was powerful.

http://www.theatlantic.com/national/archive/2011/11/the-race-iq-blackout/249105/

I’ve been reading Jeremy Varon’s “Bringing the War Home,” about the Weather Underground and the RAF, and was struck by the degree to which the violence, the will to violence, of Weatherman, was driven not by a desire to emulate the Black Panthers, but in solidaristic (perhaps misguided) reaction to the violence of the state against the Panthers. I’m just beginning to read here, so perhaps my surprise is naive.

It does seem to me, though, that a story of post-civil rights discussions about race should perhaps begin with this. For Varon, the WU should be understood in no small measure as attacking their own white privilege by embracing, seeking out, the state violence to which non-whites were routinely subject. It was a kind of ethical decision about radical solidarity, also made in reference to the Vietnamese. Whatever we might think about the political or moral value of that decision, surely it is the case that it failed, and so that the basis of the kind of change in american society that Rodgers, for instance, discusses, was the impossibility of such solidarity?

Perhaps the debates of the 1980s, at least in their limit positions, can be illuminated by the radicalism of an earlier time? For instance, this objectification, or personalization, of racism that Reed attacks–that is, the attitude that racism has got to be in someone’s mind or written on a wall somewhere to be effective–this is in a certain sense a reversal of some of the personalization, ethicization, of politics carried out by the WU. Perhaps? Perhaps the WU can be said to have been waging a war for the soul of America?

Maybe this is part of your plan all along, but I mention it now because it seems here that for you the post civil rights era really begins in the late 1970s–is this standard? is it because of the court case you mention at the beginning?

all of which is to say, very interesting post.

Gwen Ifill discusses “Post-Blackness” with Toure’ at the Aspen Institute:

http://www.aspeninstitute.org/about/blog/post-blackness

Thanks for the comments Lauren and Eric. Sorry I’m so late to return to the discussion, but the end of the semester is a crazy busy time.

Lauren, thanks for the references. I haven’t read Touré, but I assume he’s coming from a cosmopolitan perspective. My thoughts on Oprah are that she nicely represents the stripped down version of multiculturalism that works so well alongside neoliberalism (I feel like I’ve written about that topic at this blog dozens of times). Check out this book: Janice Peck, “The Age of Oprah: Cultural Icon for the Neoliberal Era.” Cosby might work a little different–he seems to harken back to Booker T.

Eric: I think Varon is precisely correct about what motivated the late white far New left. Dan Berger makes the same argument in his book about the Weather Underground, “Outlaws in America,” which I reviewed here.

Much of the 1970s discourse among left teachers and academics is rooted in a sort of attacking white privilege way, as you suggest. So you are right on target. Thanks.